Schedule

Notes

Data collection runs on a schedule by the agent which is listed below. Collections will also run on service start. If you need to refresh data prior to the scheduled collection, the only only way to do this is to restart the DBA Dash service.

Note The refresh button in the GUI will refresh the data from the repository that has already been collected from the agent. The agent collects data from your SQL Server instances. The GUI tool only is just used to report on data from the repository database - it doesn’t connect to your SQL instances directly. The GUI tool is packaged with the agent but can also be deployed separately.*

Schedule

Every 1min

- ObjectExecutionStats Captures object execution stats from sys.dm_exec_procedure_stats, sys.dm_exec_function_stats & sys.dm_exec_trigger_stats

- CPU Capture CPU utilization from sys.dm_os_ring_buffers or sys.dm_db_resource_stats (Azure).

- RunningQueries Captures a snapshot of queries currently executing. Captures blocking chains so replaces blocking snapshot. Also captures query text and optionally captures query plans

- IOStats Collects data from sys.dm_io_virtual_file_stats

- Waits Collects data from sys.dm_os_wait_stats

- PerformanceCounters Collects data from sys.dm_os_performance_counters. Collection can be customized, adding additional performance counters or collecting your own metrics with custom SQL.

- SlowQueries (Not enabled by default) Captures queries that take longer than 1second (or custom) to run using extended events

- JobHistory Collects job execution data from msdb.dbo.sysjobhistory (just what’s new since the last collection)

- DatabasesHADR Collects data from dm_hadr_database_replica_states if your SQL instance is using Always On Availability Groups.

- AvailabilityReplicas Collects data from sys.availability_replicas

- AvailabilityGroups Collects data from sys.availability_groups

- MemoryUsage Collects data from sys.dm_os_memory_clerks

- ResourceGovernorWorkloadGroups Collects data from sys.dm_resource_governor_workload_groups, only if workload groups have been configured.

- ResourceGovernorResourcePools Collects data from sys.dm_resource_governor_resource_pools, only if workload groups have been configured.

Azure DB Only:

- AzureDBElasticPoolResourceStats Collects data from sys.elastic_pool_resource_stats

- AzureDBResourceStats Collects data from sys.dm_db_resource_stats

Every Hour

- ServerProperties Various SERVERPROPERTY() function calls to get server property information.

- Databases Collect data from sys.databases

- SysConfig Collect data from sys.configurations

- Drives (When not collected via WMI) Drive collection is done via WMI if possible as this method can collect data from all volumes. The SQL collection method only collects drive capacity and free space for volumes that contain database files.

- DBFiles Collects data from sys.database_files for every database. Uses sys.master_files to collect data for databases that are not accessible.

- Backups Get’s the last backup of each type for every database from msdb.dbo.backupset

- LogRestores Collects the last log file restored for each database

- ServerExtraProperties Collects server level data from various sources. Some data collections require SysAdmin permissions and xp_cmdshell - these will be skipped if not available. e.g. Processor name, power plans & more

- DBConfig Collect data from sys.database_scoped_configurations

- Corruption Collect data from msdb.dbo.suspect_pages, msdb.sys.dm_db_mirroring_auto_page_repair & msdb.sys.dm_hadr_auto_page_repair

- OSInfo Collect data from sys.dm_os_sys_info

- TraceFlags Gets trace flags that are enabled globally with DBCC TRACESTATUS(-1)

- DBTuningOptions Returns data from sys.database_automatic_tuning_options for each database

- LastGoodCheckDB Note: This collection requires SysAdmin permissions

- Alerts Collect data from msdb..sysalerts

- CustomChecks Add your own checks to DBA Dash.

- DatabaseMirroring Collect data from sys.database_mirroring

- Jobs Collects metadata for SQL Agent jobs including a DDL snapshot using SMO. A lightweight check is run every hour to see if any jobs have been modified since the last collection. If any jobs have been modified, the collection will run. The lightweight check won’t detect some changes like changes to job schedules. After 24hrs, the collection is run even if no modification to jobs is detected.

Azure DB Only:

- AzureDBServiceObjectives Collects data from sys.database_service_objectives

- AzureDBResourceGovernance Collects data from sys.dm_user_db_resource_governance

Daily @ Midnight

- ServerPrincipals Collects data from sys.server_principals

- ServerRoleMembers Collects data from sys.server_role_members

- ServerPermissions Collects data from sys.server_permissions.

- DatabasePrincipals Collects data from sys.database_principals for each database

- DatabaseRoleMembers Collects data from sys.database_role_members for each database

- DatabasePermissions Collects data from sys.database_permissions for each database

- VLF Gets the Virtual Log File Count for each database.

- DriversWMI Collects driver information from Win32_PnPSignedDriver via WMI.

- OSLoadedModules Collects data from sys.dm_os_loaded_modules - can be used to check if antivirus has loaded into SQL Server address space

- ResourceGovernorConfiguration Scripts resource governor configuration using SMO

- DatabaseQueryStoreOptions Collects data from sys.database_query_store_options for each database

- IdentityColumns Collects last identity value and row count for tables with identity values exceeding the capture threshold for % used

Daily @ 11pm

- Database Schema Snapshots (Not enabled by default) Creates a schema snapshot of databases using SMO. This only runs for the databases listed in SchemaSnapshotDBs - schema snapshots won’t run unless this option has been set. See here for more info.

Disabled by default

TableSize Table row count and disk space usage

FailedLogins Collects failed login information from the SQL Server error log

Schedule Customization

The application has a default schedule listed above which aims to provide a good balance for most instances. If you increase the frequency of data collection, you will increase the monitoring impact and it could also increase the size of your DBA Dash repository database. Less frequent collection could mean that the data is stale or doesn’t provide enough granularity for performance troubleshooting. If you need to adjust the default schedule to better meet your needs, this can be done using the DBA Dash Service Config tool. In the Options tab click “Configure Schedule”.

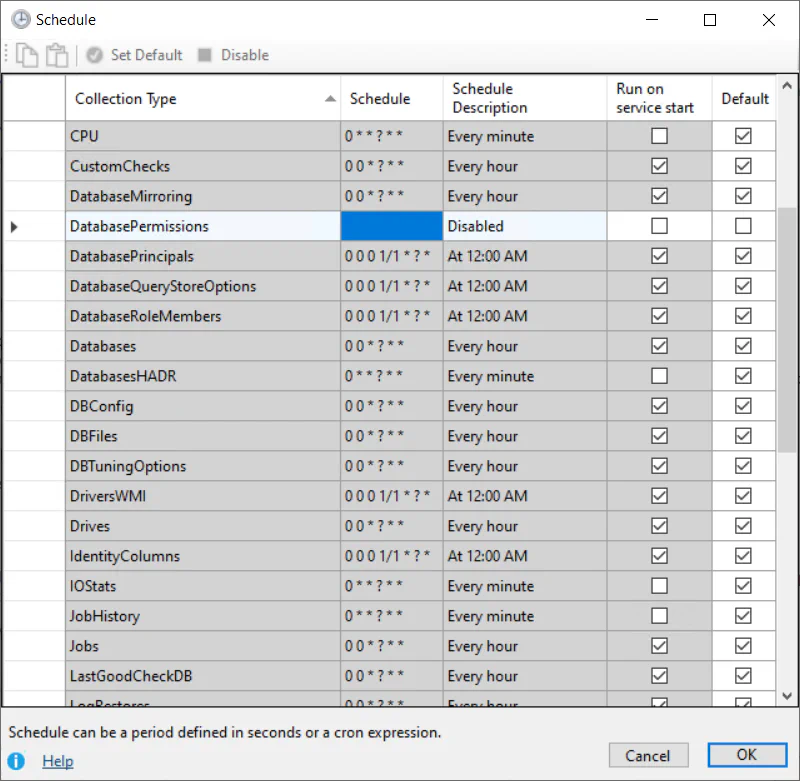

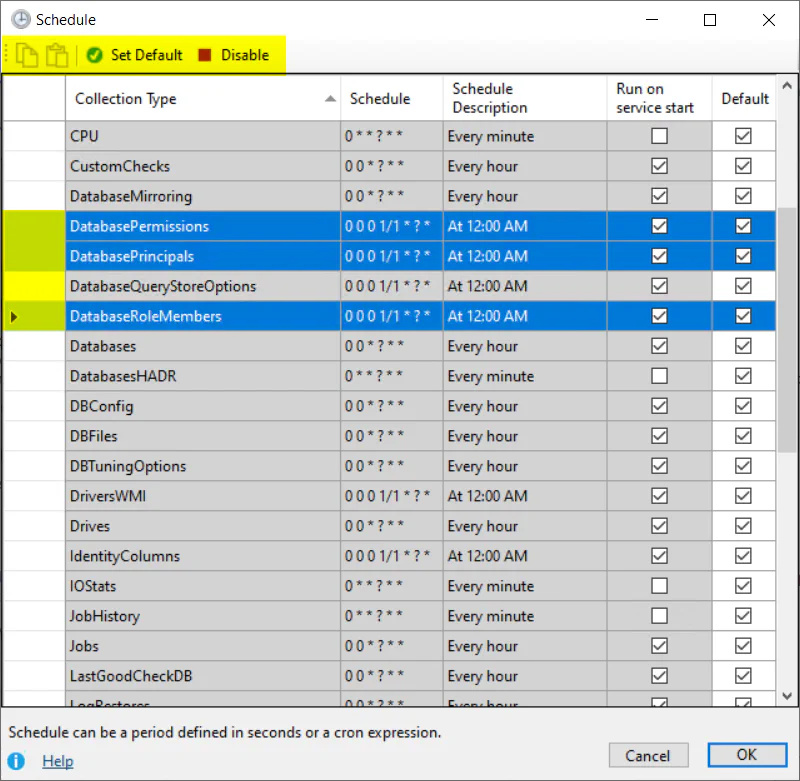

If you want to override the default schedule, uncheck “Default” and enter a string with your own schedule. The string can either be a cron expression or a duration specified in seconds. To disable a collection, use a blank string.

There is also the option to configure the collection to run on service start. Restarting the service is an option if you need to manually refresh data before the scheduled collection runs.

Collections that share the same schedule will be collected together. For example, CPU, performance counters, waits etc might all share the same 1min collection schedule. Every 1min, a job will be triggered to collect this data. The collections are run serially rather than separate triggers firing at the same time for every collection. A separate job is used for each monitored SQL instance and each schedule - collections for different instances will run in parallel. Any collections that are not applicable for the instance type will be skipped. For example, Azure DB collections are skipped for regular SQL Instances. Agent job collections are skipped for Azure DB. Availability group collections are skipped if HADR isn’t enabled.

The schedule data is saved in the ServiceConfig.json for any collections that you have overridden from the default values. The application defaults are configured in this source file.

Bulk Edits

To make it easier to apply updates to a group of schedules you can use the bulk edit features. Use the row headers (highlighted above) to select the full row. Select a single row to copy the schedule for that row. Select the collections you want to update to have the same schedule and click paste. There are also options to reset back to the default schedules or to disable the selected collections.

Per Instance customization

If you configure the schedule in the options tab it will apply to all the monitored SQL instances for that agent. If you want to adjust the schedule for a specific instance, click the “Source” tab. In the “Existing Connections” grid, click the “Schedule” link to edit the schedule for a specific instance. Any collections you don’t override the schedule for will be inherited from the agent level configuration described earlier or from the built in application default values.

Cron Expressions

Schedules can be configured in DBA Dash using cron expressions or you can enter a value in seconds.

Examples

Cron:

0 * * ? * *Every 1min0 0/5 * ? * * *Every 5min0 0/10 * ? * * *Every 10min0 0/15 * ? * * *Every 15min0 0/30 * ? * * *Every 30min0 0 * ? * *Every hour0 0 0/2 ? * *Every 2hrs0 0 0 1/1 * ? *12am0 0 23 1/1 * ? *11pm

Time in seconds:

300Every 5min (300 seconds)60Every 1min (60 seconds)30Every 30 seconds

(Execution times will vary based off when the service is started. Use a cron expression if you need more control over the actual execution time)

Cron expressions might seem cryptic but they provide a lot of flexibility for scheduling. DBA Dash uses Quartz.NET for scheduling which provides some good documentation on cron expressions. The java version of Quartz also has a good cron trigger tutorial. Or you can learn more on the wiki page. There are also some useful online cron expression generators if you need a complex schedule but don’t have time to learn the syntax.

Note: DBA Dash has a “Schedule Description” column that you can use to validate your cron expression.

Cron Generators

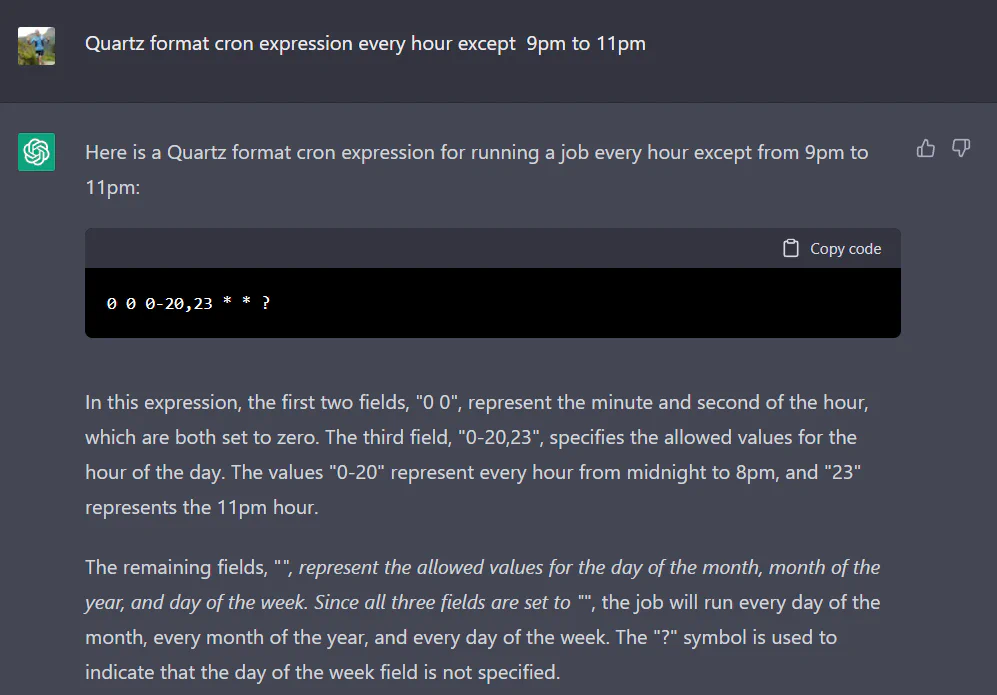

Also try ChatGPT:

Tip

Include ‘Quartz format cron expression’ as this should increase the chances of generating a compatible cron expression. DBA Dash uses Quartz.NET for scheduling.